Thank you for helping to keep the conversation going

Here are some ways we can help you.

We hope you enjoyed The Castle Conference: Digital Wellbeing for Young People.

We know you want to keep the conversation going, so we have created this page to help you do that.

The page will be updated with slides and videos when they are available.

Watch The Castle Conference Catch-Up

We hope that The Castle Conference inspired you and got you thinking and talking about Digital Wellbeing. We really hope you have been talking about the conference with your friends, family and colleagues.

We certainly have been, and we arranged it!

The Castle Conference Catch-Up was a chance to keep talking, a chance to share your thoughts, a chance to find out what questions we have been asked since the day and a chance to ask us new questions.

Andy & Lucy

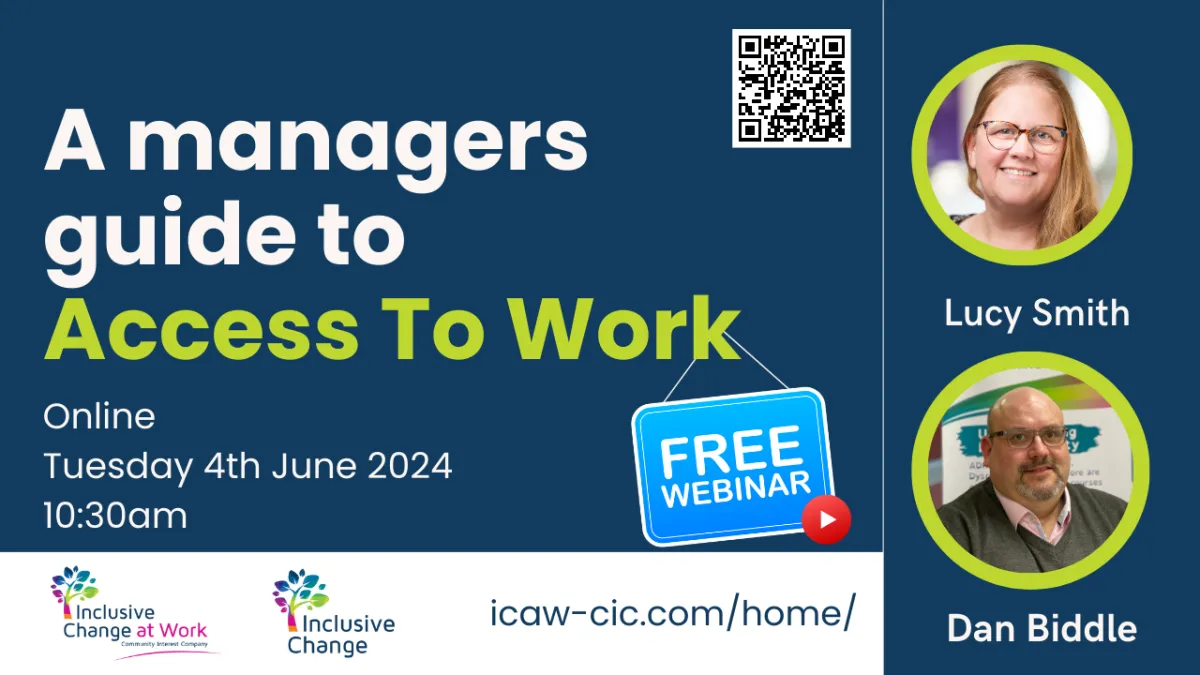

4th June 2024

Free Webinar

Join Lucy & Dan from Inclusive Change At Work CIC who will be discussing how to support disabled and neurodivergent employees to thrive at work.

Recap from the event

We have combined the slides into a video for you to rewatch.

This is only the slides - no audio. Videos will be uploaded soon.

Speaker Videos

We will be uploading videos from The Castle Conference as soon as they are available.

COMING SOON!

Resources

Links and downloads for you

AI, HR and Your Risk Register

AI Governance in the Workplace: What HR Leaders Should Be Adding to Their Risk Register

Artificial Intelligence is no longer experimental in UK workplaces. It is screening CVs, drafting performance feedback, summarising investigations and influencing workforce decisions in ways that are often invisible to the people affected by them.

Yet in many organisations, AI still sits under digital transformation or IT strategy.

But is that a risk?

When AI intersects with recruitment, performance management or disciplinary processes, it moves directly into the territory of employment law, data protection and organisational governance. HR leaders are not simply stakeholders; they are central to any change strategy.

Why AI in HR Is a Governance Issue

Under the Equality Act 2010, employers remain responsible for discriminatory outcomes, regardless of whether a decision is made by a manager or influenced by software.

If an AI recruitment system indirectly disadvantages candidates with protected characteristics - including disability - liability rests with the organisation. The fact that the bias may be embedded in training data or algorithm design does not remove employer accountability.

The CIPD has been clear that AI in recruitment must be implemented responsibly, with transparency, oversight and consideration of fairness and inclusion. The professional body recognises that introducing AI into people processes is not a simple efficiency upgrade. It requires policy alignment and governance maturity.

Alongside discrimination risk sits data protection risk. The UK GDPR and Data Protection Act 2018 require lawful processing, transparency and safeguards where automated systems significantly affect individuals. If a candidate or employee asks how a decision was reached, organisations must be able to provide a meaningful explanation. Informal assurances are not enough. Documentation and oversight are expected.

Employment Tribunal Risk Is Increasing

Tribunals focus on fairness and impact.

If AI contributes to a rejected application, redundancy scoring, disciplinary outcome or performance dismissal, the organisation must demonstrate that the overall process was proportionate, unbiased and subject to meaningful human review.

Disability discrimination claims are particularly exposed. Neurodivergent traits may be misinterpreted by automated systems as performance concerns or behavioural risk. Where no structured consideration of reasonable adjustments has taken place, organisations may find themselves defending avoidable claims.

Awards can include compensation for financial loss and injury to feelings. Organisations also face reputational damage and the cost of leadership time spent managing proceedings.

This is not theoretical. Legal advisers are already scrutinising algorithmic influence in employment disputes.

The Less Visible Risk: Trust, Capability and Culture

There are also unintended organisational risks that are harder to see.

When AI tools are introduced without clear communication or defined boundaries, employees may experience uncertainty about surveillance, fairness or job security. For neurodivergent staff in particular, opaque systems and unpredictable change can increase cognitive load and anxiety.

Over time, this can show up as disengagement, grievance, attrition or reduced psychological safety.

There is also a longer-term workforce issue. Heavy reliance on generative AI for drafting and analysis may weaken the development of critical thinking in early-career employees. Productivity may increase in the short term, while capability erodes gradually.

Governance is not simply about avoiding claims. It is about sustaining organisational resilience.

What HR Leaders Should Include on the AI Risk Register

If AI is being used in recruitment, performance or workforce analytics, it should appear explicitly on the organisational risk register.

At a minimum, HR leaders should consider including:

Clear executive ownership of AI oversight. Who is accountable for workforce impact, not just technical implementation?

An approved AI tools policy. Which systems are authorised for use in HR processes, and under what conditions?

Equality and bias impact assessment. Has the organisation tested whether the tool disproportionately disadvantages protected groups?

Data Protection Impact Assessments. Where personal data is processed, particularly in automated decision-making, has a DPIA been completed and documented?

Human review checkpoints. Where in the process is meaningful human judgement applied before decisions are finalised?

Alignment with recruitment and disciplinary policies. Do existing policies reflect AI-enabled processes, or are they silent on automated input?

Employee challenge mechanisms. Can candidates or employees request human review of AI-influenced decisions?

Manager training. Do managers understand the limitations of the tools they are using, and their continued accountability?

This does not require abandoning innovation. It requires treating AI as part of core organisational infrastructure - subject to the same scrutiny as finance, safeguarding or health and safety.
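For teams that keep their risk register in a structured form, the checklist above can be recorded per tool so that missing controls are easy to spot. The following is a minimal sketch in Python under stated assumptions: the names `CONTROLS`, `AIRiskEntry` and `cv_screening_tool` are hypothetical labels invented for illustration, not part of any HR system or standard, and the control identifiers simply paraphrase the eight items listed above.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical identifiers paraphrasing the eight checklist items above.
CONTROLS = [
    "executive_ownership",
    "approved_tools_policy",
    "equality_bias_assessment",
    "dpia_completed",
    "human_review_checkpoints",
    "policy_alignment",
    "employee_challenge_mechanism",
    "manager_training",
]


@dataclass
class AIRiskEntry:
    """One risk register entry for an AI tool used in an HR process."""

    tool_name: str
    hr_process: str
    controls_in_place: set = field(default_factory=set)

    def gaps(self) -> list[str]:
        """Return the checklist controls not yet evidenced, in checklist order."""
        unknown = self.controls_in_place - set(CONTROLS)
        if unknown:
            # Guard against typos silently counting as a control.
            raise ValueError(f"Unrecognised controls: {sorted(unknown)}")
        return [c for c in CONTROLS if c not in self.controls_in_place]


# Illustrative entry: a recruitment tool with only two controls evidenced.
entry = AIRiskEntry(
    tool_name="cv_screening_tool",
    hr_process="recruitment",
    controls_in_place={"approved_tools_policy", "dpia_completed"},
)

for control in entry.gaps():
    print(f"{entry.tool_name}: missing {control}")
```

A structure like this only records whether a control has been evidenced. Whether the control is adequate remains a matter of human judgement and legal advice.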

Moving From Experimentation to Accountability

AI will continue to evolve rapidly. Organisations that treat it informally may only discover governance weaknesses when a complaint or claim arises.

Those that integrate AI into formal risk management processes are more likely to embed it in a way that strengthens fairness, capability and trust.

For HR leaders, the strategic question is not whether AI should be used. It is whether its use is structured, lawful and aligned with organisational responsibility.

HR leaders who address AI governance early will reduce avoidable risk and strengthen confidence in how decisions are made.

FAQs: AI Governance and Employment Risk in the UK

Can an employer be taken to tribunal for AI-based recruitment decisions?

Yes. If AI influences recruitment decisions and results in discrimination, an unfair process or a failure to make reasonable adjustments, the employer can face an employment tribunal claim. Under the Equality Act 2010, liability rests with the organisation, even if a third-party AI tool was used.

Does the Equality Act 2010 apply to AI in recruitment?

Yes. The Equality Act 2010 applies to all recruitment and employment decisions, regardless of whether they are made by a person or supported by software. If an AI system indirectly disadvantages candidates with protected characteristics, the employer may be liable unless the practice can be objectively justified.

Does UK GDPR apply to AI tools used in HR?

Yes. If AI systems process personal data, they fall within the scope of UK GDPR and the Data Protection Act 2018. Organisations must ensure lawful processing, transparency and appropriate safeguards, particularly where automated decision-making significantly affects individuals.

What is automated decision-making under UK GDPR?

Automated decision-making refers to decisions made solely by automated means, without meaningful human involvement, that have legal or similarly significant effects on individuals. In some circumstances, individuals have the right to request human review of such decisions.

How can AI create discrimination risk in recruitment?

AI systems are often trained on historical data. If that data reflects existing workplace bias, the system may replicate or amplify those patterns. This can lead to indirect discrimination against candidates with protected characteristics, including disabled or neurodivergent applicants.

What are the risks of using AI in performance management?

AI tools used in performance scoring or behavioural analysis may misinterpret communication differences, working styles or neurodivergent traits. Without human oversight and reasonable adjustment frameworks, this can increase discrimination risk and undermine fairness in capability processes.

Should AI be included on an HR risk register?

Yes. If AI is used in recruitment, performance management, workforce analytics or disciplinary processes, it should appear explicitly on the organisational risk register. Governance should include oversight responsibility, bias assessment, data protection impact assessments and documented human review.

Who is responsible for AI governance in an organisation?

While IT teams may manage implementation, responsibility for workforce impact and legal compliance typically sits with executive leadership and HR. Clear accountability at senior level is essential.

How can organisations reduce AI-related employment risk?

Organisations can reduce risk by completing equality and data protection impact assessments, documenting human oversight, updating HR policies to reflect AI use, training managers and ensuring individuals can challenge or request review of AI-influenced decisions.

Get Your FREE eBook

A Practical Guide to Simplify Your Digital Life

Digital Safety CIC - Online Resources

Exhibitor Information

We think you will agree that our exhibitors contributed so much to the event.

We know that we cannot tackle the problems we face alone - which is why we love working with others.

Thank you - you made the day INCREDIBLE!

Inclusive Change At Work CiC

Bradbury House

Wheatfield Road

Bradley Stoke

Bristol

BS32 9DB

Companies House: 13271923

ICO registration: ZZB293922

UK Register of Learning Providers

UKRLP: 10090653

Copyright © 2024 Inclusive Change At Work CiC | All Rights Reserved
